| This is intended for those who know terrestrial or nature photography and are curious how technique and equipment differ between “regular” photography and astrophotography. Below are some pictures of typical amateur astronomy equipment. I believe what nature photographers call a “camera” is the combination of a box with an imaging sensor in it and a lens. For astronomers, “camera” means just the box with the imaging sensor. We call the “lens” a “telescope.” |

| Astroimaging |


| The telescope (the black tube) is about 15 inches in diameter and about 3 feet long. The white writing on the tube is about 6 feet off the ground (about as high as the top of your head). The mount points the telescope at whatever you want to image and, most importantly, keeps it on target through the exposure. Note all the cables and wires. You don't stand outside all night doing this. Everything is automated and robotic. The cables connect to one or more computers (for me, inside the garage) which point the scope, switch it from target to target during the night, focus automatically, control the temperature of the camera, and more. |

| Here is a closeup of the back of the scope. The big tan device is the main camera. The chip is only about the size of the chip in a 35mm camera (some astro cameras have larger chips, some smaller). The camera is so large for two reasons: a) It is a monochrome camera (see below) and gets color images by rotating color filters in front of the imaging chip. The large disk at the front of the camera contains a wheel with multiple filters of different colors. b) It is cooled to anywhere from -20C to -40C to reduce noise. The box contains the hardware that cools the chip. Professional astronomers cool their cameras with liquid nitrogen, which boils at about -196C. |

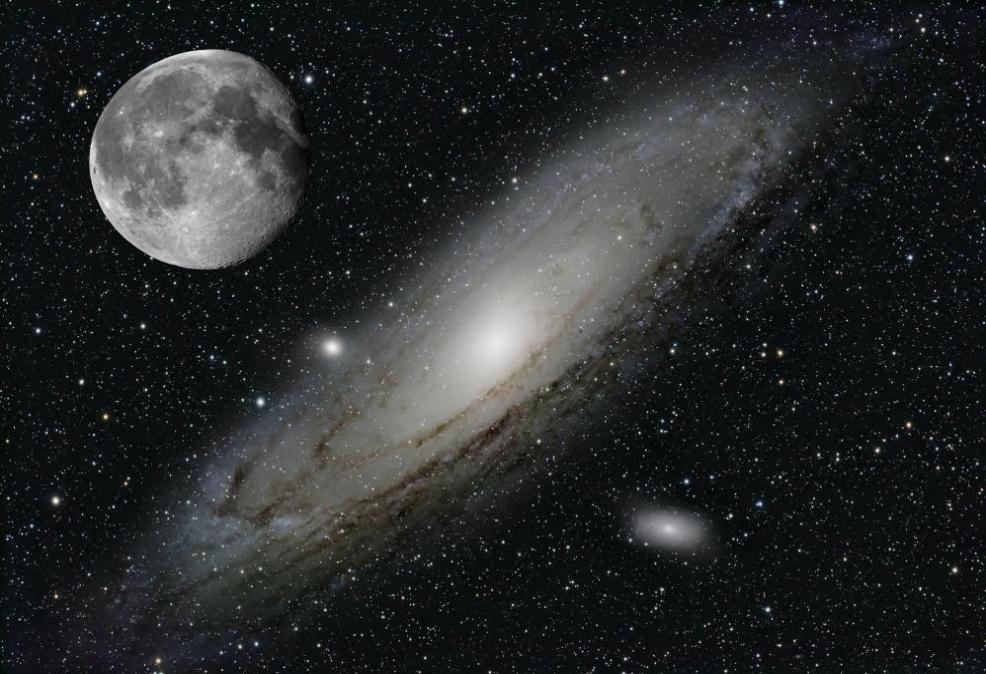

| Just as photographers have different lenses (wide angle, telephoto), we use different telescopes for similar reasons. Some take images of wide areas of the sky, others take very high resolution images of small objects. Most people think the main purpose of a telescope is to magnify small things, but a more important reason is to gather lots of light so as to see very dim objects, which may not be small at all. The following two images are of a galaxy (M31), which is similar in size and shape to our own galaxy and “nearby” as such things go (about 2.5 million light years away, which is right next door), and of a collection of glowing gas in Orion where new stars are forming: |

| M31 |

| Now look at those same images with an image of the full moon superimposed on them for scale (the moon was not really in this location; I have just superimposed it at the same image scale): |

| The objects are not small. They are much larger than the full moon. The reason you can't see them without a telescope (actually you can see M31, barely, but only as a hazy area of light, not as a galaxy with arms and dust lanes) is not that they are small but that they are very dim. You need a telescope to gather enough light to see those dim objects. |

| We gather dim light in two ways: a large lens or mirror (the diameter of amateur scopes runs from maybe 60mm up to 400 or 500mm), or a “long exposure” (hours, sometimes many, many hours). We don’t capture all those hours at once. You capture single exposures of maybe 5 to 30 minutes each, but do that over and over all night or across many nights to finally get an image with many hours of exposure. Here is the “Elephant’s Trunk Nebula.” The total exposure time was 42 hours over several nights: |
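
To put rough numbers on those two ways of gathering light, here is a small sketch of the arithmetic. The function names are my own inventions for illustration, and the square-root rule for noise is an idealization that assumes the noise in each frame is purely random and independent (real frames also carry read noise, sky glow and so on).

```python
import math

def subframes_needed(total_hours, sub_minutes):
    """How many sub-exposures of a given length add up to the total time."""
    return math.ceil(total_hours * 60 / sub_minutes)

def ideal_snr_gain(n_frames):
    """Idealized signal-to-noise gain from averaging n equal frames.

    Assumption: the noise in each frame is random and independent,
    so it averages down as the square root of the frame count.
    """
    return math.sqrt(n_frames)

def light_grasp_ratio(big_mm, small_mm):
    """Light collected scales with aperture area, i.e. diameter squared."""
    return (big_mm / small_mm) ** 2

if __name__ == "__main__":
    # The 42-hour total from the text, split into 5, 10 or 30 minute subs.
    for sub in (5, 10, 30):
        n = subframes_needed(42, sub)
        print(f"{sub} min subs: {n} frames, SNR gain ~{ideal_snr_gain(n):.1f}x")
    # Largest vs smallest amateur apertures mentioned in the text.
    print(f"500mm vs 60mm: ~{light_grasp_ratio(500, 60):.0f}x the light")
```

For the 42-hour image, 30-minute exposures would mean 84 frames and 5-minute exposures would mean 504.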

| Our cameras differ from standard cameras in a couple of ways. Typical cameras (Nikon, Canon) are color cameras. The imaging chip has its pixels (subunits of the sensor) covered with small red, green and blue filters in a repeating pattern (typically two green, one red and one blue in each group of four, since your eye is more sensitive to green than to red or blue). That has the advantage of getting a color image in one exposure, but it costs resolution. Each color in what you are imaging is captured by only a fraction of the pixels (one half for green, only 1/4 each for red and blue), so you lose fine detail. Astronomical cameras are monochrome. We get color by putting a colored glass filter in front of the entire chip. This way, with a red filter in front of the chip, every single pixel captures red data. There is a motorized wheel with multiple filters (red, green, blue and more). We first take a red image, then a blue, then a green. The cost of this method is having to take multiple images to get full color. But our objects aren’t moving (well, not appreciably), so you can take one image, then a second 10 minutes later, and see the same galaxy. Another advantage of the filters is that where a color camera can only capture red, green and blue, we can capture all sorts of colors: infrared, ultraviolet, or the specific colors of glowing gases. If you excite hydrogen, for example, with radiation from the surrounding stars, it glows in a specific color (deep red), and that color is in a very narrow band (i.e. it is all essentially the same wavelength, with almost no spill into slightly different colors). Other elements glow in other colors when excited with radiation. Oxygen is greenish blue, for example. A very narrow filter can capture the characteristic color of glowing hydrogen, showing essentially only the hydrogen and almost no background glow from surrounding stars or dust. Another filter can then capture excited, glowing oxygen, and so on. That image of the Elephant’s Trunk was taken using such filters. 
Sulfur (which glows an even deeper red than hydrogen) is shown as red, hydrogen as green, and oxygen as blue. In other words, the colors are mapped in the same order as their natural wavelengths but spread out more (so sulfur and hydrogen are not both red). That color mapping is the “Hubble Palette” used by the Hubble Space Telescope. Thus what you are seeing is a map of how those various gases are distributed in the gas cloud. |
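
The color mapping described above can be sketched in a few lines. This is only an illustration under simple assumptions: each filter's image is a tiny grid of brightness numbers, each is stretched to the range 0 to 1 on its own, and the function names `normalize` and `hubble_palette` are hypothetical. Real processing software does far more (alignment, calibration, nonlinear stretching).

```python
def normalize(frame):
    """Rescale one monochrome frame so its values run from 0.0 to 1.0."""
    lo = min(min(row) for row in frame)
    hi = max(max(row) for row in frame)
    span = (hi - lo) or 1  # avoid dividing by zero on a flat frame
    return [[(v - lo) / span for v in row] for row in frame]

def hubble_palette(sulfur, hydrogen, oxygen):
    """Combine three narrowband frames into (red, green, blue) pixels.

    Mapping from the text: sulfur -> red, hydrogen -> green, oxygen -> blue.
    All three frames are assumed to be the same size and already aligned.
    """
    s, h, o = normalize(sulfur), normalize(hydrogen), normalize(oxygen)
    height, width = len(s), len(s[0])
    return [[(s[y][x], h[y][x], o[y][x]) for x in range(width)]
            for y in range(height)]

# Made-up 2x2 frames standing in for the three filtered exposures.
sulfur = [[0, 10], [5, 10]]
hydrogen = [[100, 300], [200, 100]]
oxygen = [[4, 2], [2, 0]]
rgb = hubble_palette(sulfur, hydrogen, oxygen)
```

In this toy example the top-left pixel has oxygen signal only, so it comes out pure blue.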

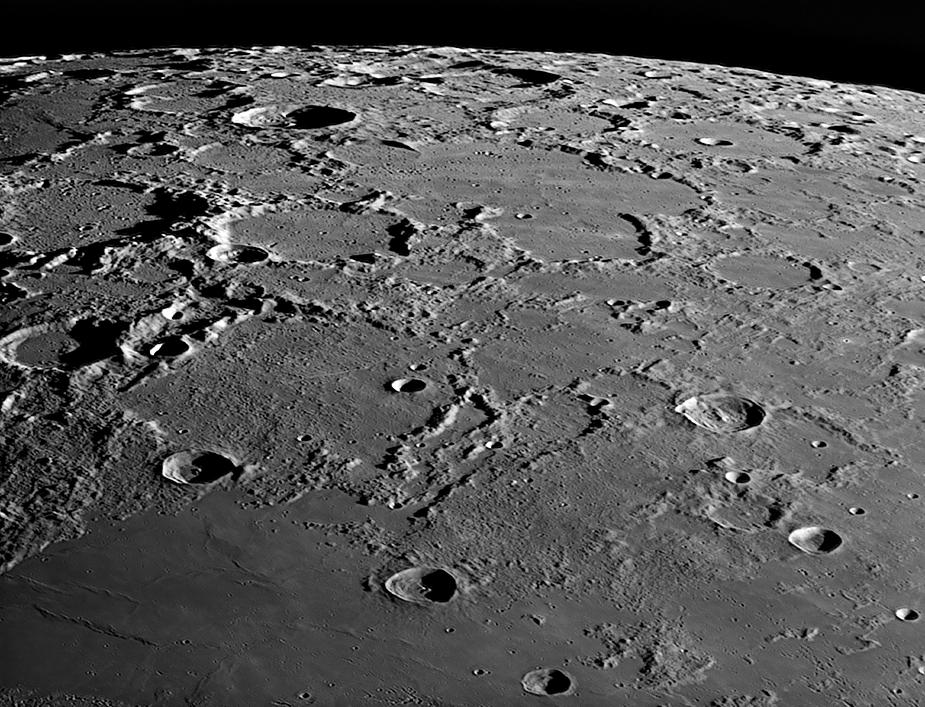

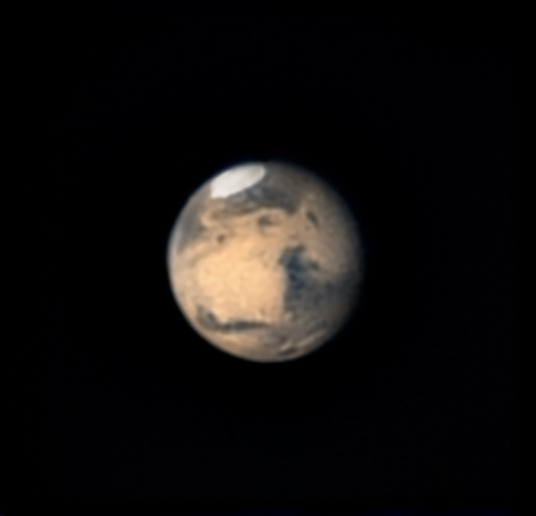

| A completely different type of camera is used for high detail images of planets and the moon. Here we use a camera capable of very short exposures (5 to 30 milliseconds). The reason is to “freeze the atmosphere” so the motion of the air doesn’t blur the image. We may take many thousands of images (at 10 milliseconds per exposure you can take 3000 images in 30 seconds). Using software, we then compare the images, discard the blurred ones and stack only the sharpest (maybe the sharpest 10%, or the sharpest 300 images). This again is done with a monochrome camera and a set of color filters. This captures amazing detail but, because each exposure is so short, is pretty much limited to bright objects (the moon, planets and the sun). |
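
Here is a minimal sketch of that "keep only the sharpest frames" idea. It is not how any particular stacking program works: the sharpness score (summed squared differences between neighboring pixels) is just one simple choice I am assuming for illustration, the function names are hypothetical, and real software also aligns the frames before averaging them.

```python
def sharpness(frame):
    """Score a frame by how strongly neighboring pixels differ.

    Blurred frames have smoother transitions, so they score lower.
    """
    height, width = len(frame), len(frame[0])
    total = 0.0
    for y in range(height):
        for x in range(width):
            if x + 1 < width:
                total += (frame[y][x + 1] - frame[y][x]) ** 2
            if y + 1 < height:
                total += (frame[y + 1][x] - frame[y][x]) ** 2
    return total

def lucky_stack(frames, keep_fraction=0.10):
    """Average the sharpest fraction of frames, pixel by pixel.

    Assumes all frames are the same size and already aligned.
    """
    keep = max(1, round(len(frames) * keep_fraction))
    best = sorted(frames, key=sharpness, reverse=True)[:keep]
    height, width = len(best[0]), len(best[0][0])
    return [[sum(f[y][x] for f in best) / keep for x in range(width)]
            for y in range(height)]

# Tiny made-up frames: one crisp, three smeared by the atmosphere.
sharp = [[0, 9], [9, 0]]
blurry = [[4, 5], [5, 4]]
result = lucky_stack([blurry, sharp, blurry, blurry], keep_fraction=0.25)
```

With 3000 frames and the default `keep_fraction` of 0.10, this keeps 300 frames, matching the numbers in the text.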

| Jupiter |

| Saturn |

| North Pole of the Moon |

| Mars |

| These images are of very high resolution. In the image of Clavius crater above, the smallest craters you are seeing are about 800 to 900 meters in diameter. In case you are wondering, you can't see the Apollo landing craft with earth-based scopes, nor even with the Hubble Space Telescope. They can be seen in images taken from spacecraft orbiting the moon at low altitude, though. In the image of Jupiter, below, you can even see detail on Jupiter's moon Ganymede. |
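
A quick back-of-the-envelope check shows why the craters are visible but the landers are not. The sketch below makes several assumptions that are mine, not measurements: a mean Earth-Moon distance of about 384,400 km, a lander treated as a round 4 meters across, green light at 550 nm, and the standard Rayleigh formula (1.22 × wavelength / aperture) as the resolution limit of a perfect telescope.

```python
import math

ARCSEC_PER_RADIAN = 180 / math.pi * 3600
MOON_DISTANCE_M = 384_400_000  # assumed mean Earth-Moon distance, meters

def angular_size_arcsec(size_m, distance_m=MOON_DISTANCE_M):
    """Apparent size of an object in arcseconds (small-angle approximation)."""
    return size_m / distance_m * ARCSEC_PER_RADIAN

def rayleigh_limit_arcsec(aperture_m, wavelength_m=550e-9):
    """Theoretical resolution limit of a perfect telescope, in arcseconds."""
    return 1.22 * wavelength_m / aperture_m * ARCSEC_PER_RADIAN

crater = angular_size_arcsec(800)    # smallest craters in the Clavius image
lander = angular_size_arcsec(4)      # assumed round figure for a lander
hubble = rayleigh_limit_arcsec(2.4)  # Hubble's 2.4 meter mirror

if __name__ == "__main__":
    print(f"800 m crater: {crater:.3f} arcsec")
    print(f"4 m lander:   {lander:.4f} arcsec")
    print(f"Hubble limit: {hubble:.3f} arcsec")
```

Under these assumptions an 800 meter crater spans about 0.43 arcseconds, while a lander spans about 0.002 arcseconds, far smaller than the roughly 0.06 arcsecond limit of even Hubble's mirror.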

| Moon - Clavius Crater |

| Above is that same image of Ganymede, but shown at a larger scale. Ganymede is somewhat larger than our moon (about one and a half times its diameter), but much, much farther from us. Those markings on the surface are real. They correspond to features shown on the surface of Ganymede in images taken by spacecraft orbiting Jupiter. |